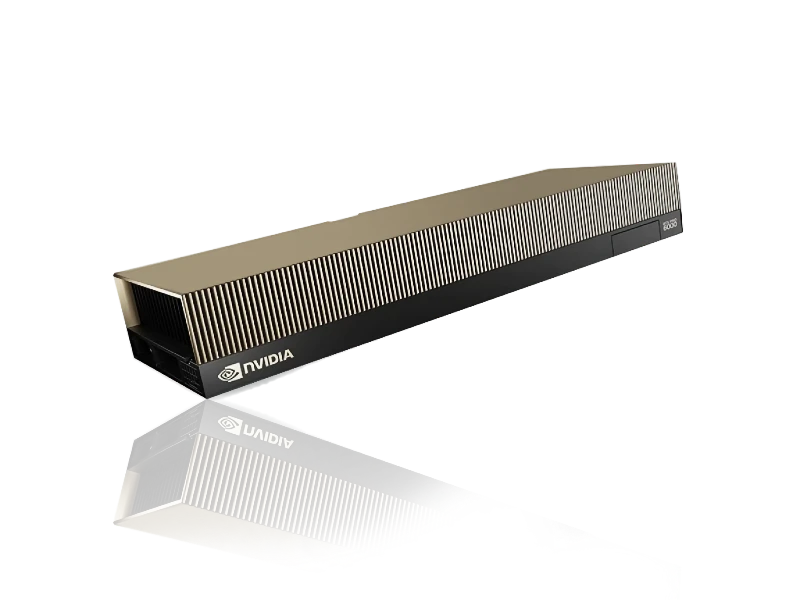

GPU Clusters - NVIDIA B200

64 nodes B200 | USA | Available Apr 15

Unrivaled throughput for Foundation Model Training, Fine-Tuning, and Massive-Scale Inference. B200 inventory is available for long-term lease or hardware purchase.

↳ Your business needs GPU capacity. We connect you with the world’s best GPUaaS providers through our five-point GPU promise.

We source GPU from a network of wholesale GPU cloud providers. You can get low-latency sovereign infrastructure almost anywhere. Just tell us which GPU locations and compliance requirements you need.

The GPU ecosystem is kept complex for a reason: every marketplace and broker adds its percentage to the cost. Skip those premiums. We connect you directly with wholesale providers and cut out unnecessary fees.

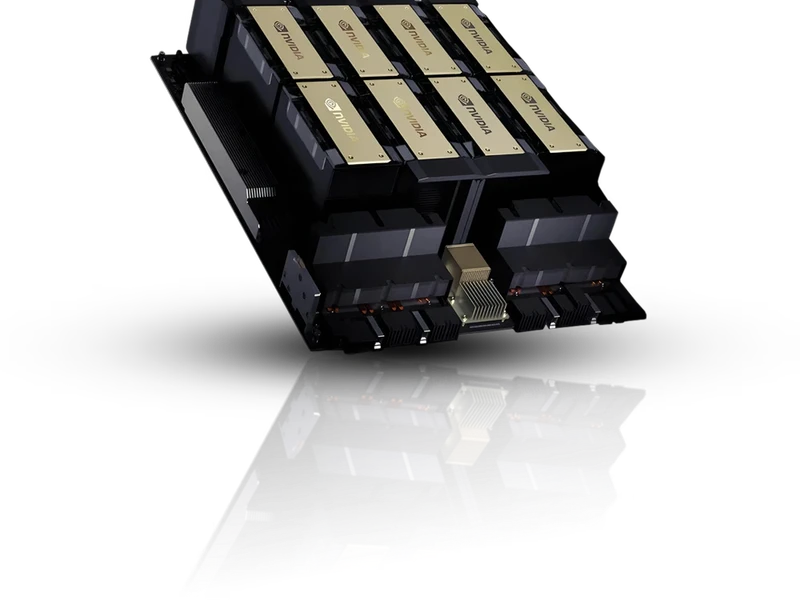

Total GPUs: 64 × NVIDIA B200

Servers: water-cooled Supermicro GPU servers

Host CPUs: AMD Genoa (EPYC series)

Storage Tier: ~6PB NVMe

Storage Servers: Supermicro AS-1115HS-TNR, AMD EPYC 9454 (Genoa), 2×200G NVIDIA ConnectX-7 NIC

Storage Network: Arista 7060X5 switches

Backend Network (Training – 32 nodes): Celestica DS5000 switches, 800G Ethernet fabric, RDMA over Converged Ethernet (RoCE)

Frontend Network: 200G connectivity via BlueField-3 DPUs

NIC Configuration (training servers): 8× NVIDIA ConnectX-7 (200G), BlueField-3 DPU (dual-port 200G)

NIC Configuration (inference servers, 96): BlueField-3 DPU (dual-port 200G)

Management Network: Dedicated 1G port on all nodes

Kubernetes Management Nodes (3): Supermicro AS-2125HS-TNR (2U), AMD EPYC 9384X (Genoa), 64GB DDR5 RAM, 2×100G Broadcom BRCM57508 NIC

Orchestration: Dedicated Kubernetes cluster, separate control + data plane

Gateway & Security: Isolated network segmentation, load balancing + firewall layer
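As a rough sizing aid, the backend figures above can be turned into line-rate bandwidth numbers. The sketch below is illustrative only: it assumes the listed 200G per ConnectX-7 means 200 Gb/s per NIC, that all 8 NICs on a training server face the backend fabric, and that the 32 training nodes named in the backend network line are the ones being counted. The constant and function names are ours, and real throughput will be lower than line rate.

```python
# Line-rate arithmetic for the training backend (upper bounds, not benchmarks).
# Assumptions: 200 Gb/s per ConnectX-7, 8 backend NICs per training server,
# 32 training nodes, as read from the spec list.
NICS_PER_TRAINING_NODE = 8
NIC_GBPS = 200
TRAINING_NODES = 32


def node_backend_gbps(nics: int = NICS_PER_TRAINING_NODE, gbps: int = NIC_GBPS) -> int:
    """Aggregate backend line rate of one training server, in Gb/s."""
    return nics * gbps


def cluster_backend_tbps(nodes: int = TRAINING_NODES) -> float:
    """Aggregate backend injection bandwidth across all training nodes, in Tb/s."""
    return nodes * node_backend_gbps() / 1000


per_node_gbps = node_backend_gbps()
per_node_gbytes = per_node_gbps / 8  # convert gigabits to gigabytes per second
print(f"Per node: {per_node_gbps} Gb/s (~{per_node_gbytes:.0f} GB/s)")
print(f"Across {TRAINING_NODES} nodes: {cluster_backend_tbps():.1f} Tb/s")
```

Under these assumptions each training server has 1,600 Gb/s (about 200 GB/s) of backend line rate, which is the number to compare against your model's all-reduce traffic when you ask for a quote.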

We connect you with vetted GPU infrastructure providers: wholesale operators with a wide range of GPUs available now.

Our network of GPUaaS providers is global. You can get low-latency sovereign GPU infrastructure almost anywhere. If specific locations or certifications are important to you, just tell us when you ask for a quote.

Why pay a mark-up on GPU resources? We connect you to wholesale GPU service providers and elastic GPU resources that cost up to 30% less than hyperscalers. Avoid unnecessary fees, and get better terms and SLAs too.

Rent short-term or long-term GPU for your projects. You don't have to lock in to a multi-year contract. Choose PAYG or a commitment term for optimal pricing: it’s up to you.

Reserved pricing: $4.20/GPU/hr

10% upfront to secure allocation. If you’re planning large training runs or scaling inference in Q2, this is a good window to lock in capacity.
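To see what the reserved rate means for a budget, here is a small worked example. It assumes a 64-GPU reservation (the total listed above) held for a hypothetical 30-day term, and that the 10% upfront payment is calculated on the full term value. The listing doesn't spell out the term or the deposit basis, so confirm both in your quote. The function name and its parameters are illustrative.

```python
from decimal import Decimal

# From the listing: reserved rate and upfront fraction.
RATE_PER_GPU_HOUR = Decimal("4.20")  # USD per GPU per hour
UPFRONT_FRACTION = Decimal("0.10")   # "10% upfront to secure allocation"
CENT = Decimal("0.01")


def reserved_cost(gpus: int, hours: int) -> dict:
    """Estimate total, upfront, and remaining cost for a reservation.

    Assumption: the upfront payment is a fraction of the full term value.
    """
    total = (Decimal(gpus) * Decimal(hours) * RATE_PER_GPU_HOUR).quantize(CENT)
    upfront = (total * UPFRONT_FRACTION).quantize(CENT)
    return {"total": total, "upfront": upfront, "remaining": total - upfront}


# Hypothetical term: 64 GPUs for 30 days of continuous use.
estimate = reserved_cost(gpus=64, hours=24 * 30)
for label, amount in estimate.items():
    print(f"{label:>9}: ${amount:,.2f}")
```

On those assumptions the term comes to $193,536.00, with $19,353.60 due upfront. Swap in your own GPU count and hours to size a different run.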

Start simple – how many GPUs or nodes and what type – then add as much detail as you like. Inference or training. Model architecture. Precision. Virtualization type. Budgets and timelines.

We do the legwork, and find providers with capacity that fits your need. Our network includes:

When we’ve found the perfect partner for your project, you’ll get quotations for the GPU you need, usually within a few hours.

We’ll smooth your ride through the provisioning process, and you can get on with your project.

Rent high-performance NVIDIA GPU clusters for ML, GenAI, rendering, simulation and development. Get DGX-class infrastructure at market-leading rates.

Gold standard HGX clusters for large scale training, tuning, inference

Ultra-efficient Blackwell clusters for mid-scale AI, visualization, ML

The industry standard for AI training and inference at scale

GPUaaS.com is a free service from hosted·ai. We've developed a unique software platform that makes GPU infrastructure way more efficient for GPUaaS providers.

The wholesale providers in our network use our platform to maximize GPU utilization, so they can deliver GPU resources to you at ~30% lower cost. As hosted·ai partners, we know they meet rigorous standards for uptime, performance, support and SLAs. You get the same GPUs and the same performance you'd get from hyperscalers or neoclouds, but without their retail mark-up.

Got more questions?

Contact us